Archive for February, 2010

Six degrees of separation

Answering questions asked on the site.

Kathy asks:

I am developing a social network site in PostgreSQL and want to find out if two people are no more than 6 friends apart.

If your site grows popular, most probably, they are not. But we better check.

On most social networks, friendship is a symmetric relationship (however, LiveJournal is a notable exception). This means that if Alice is a friend to Bob, then Bob is a friend to Alice as well.

The friendship relationship is best stored in a many-to-many link table with a PRIMARY KEY on both link fields and an additional check condition: the friend with the least id should be stored in the first column. This is to avoid storing a relationship twice: a PRIMARY KEY won't be violated if the same record with the columns swapped will be inserted, but the check constraint will. The check constraint will also forbid storing a friend relationship to itself.

Let's create a sample table:

Read the rest of this entry »

Efficient circle distance testing

Comments enabled. I *really* need your comment

Answering questions asked on the site.

eptil asks:

I am using SQL Server 2008, but not the spatial features.

I have a table with few entries, only 40,000. There is an

id INT PRIMARY KEYcolumn and two columns storing a 2d coordinate, both decimals.I would like to find all the records that do not have other records within a given radius. The query I am using at the moment is:

SELECT id, x, y

FROM mytable t1

WHERE (

SELECT COUNT(*)

FROM mytable t2

WHERE ABS(t1.x - t2.x) < 25 AND ABS(t1.y - t2.y) < 25 ) = 1 [/sourcecode]

This is taking 15 minutes to run at times.Is there a better way?

Of course using spatial abilities would be a better way, but it is possible to make do with plain SQL. This will also work in SQL Server 2005.

In most database engines, the spatial indexes are implemented as the R-Tree structures. SQL Server, however, uses another approach: surface tesselation.

Basically, it divides the surface into a finite number of tiles, each assigned with a unique number. The identifiers of tiles covered by the object are stored as keys of a plain B-Tree index.

When SQL Server's optimizer sees a geometrical predicate against an indexed column, it calculates the numbers of tiles that possibly can satisfy this predicate. Say, if the tiles are defined as squares with side 1, the predicate column.STDistance(@mypoint) < 2 can only be satisfied by the objects within 2 tiles away from @mypoint's tile. This gives a square of 25 tiles with @mypoint's tile in the center. The tile numbers can be found and searched for using the index. Exact filtering condition is then applied to each candidate value returned by the index.

Same solution can be used in our case even without the spatial functions. Comparing tile numbers is an equijoin and hash join method is eligible for this operation. We can even choose the tiling algorithm individually for each query, since we don't have to store the tile identifiers in the table, and the hash table will be built dynamically anyway.

Let's create a sample table and see how it works:

Read the rest of this entry »

Matching sets: aggregates vs. first miss

Comments enabled. I *really* need your comment

From Stack Overflow:

Here is my schema:

Suppliers sid sname address

Parts pid pname color

Catalog sid pid cost I need to find the sids of suppliers who supply every red part or every green part.

This task requires matching the sets.

We need to compare two sets here: the first one is the set of the parts of given color; the second one is the set of parts provided by a given supplier. The former should be the subset of the latter.

Unlike other engines, MySQL does not provide the set operators like EXCEPT or MINUS that allow to check the subset / superset relationship very easily. We have to use the record-based solutions.

There are two ways to check that:

First miss

technique: test each record from the subset candidate against the superset candidate, returningFALSEif there is no match.Aggregate

technique: compare the number of records in the subset candidate to the number of records in their intersection. If the numbers are equal, the sets match

Let's test which way is faster in which cases. To do this, we will need some sample tables:

Read the rest of this entry »

Sargability of monotonic functions: example

In my previous article I presented a proposal to add sargability of monotonic functions into the SQL engines.

In a nutshell: a monotonic function is a function that preserves the order of the argument so that it gives the larger results for the larger values of the argument. It is easy to prove that a B-tree with each key replaced by the result of the function will remain the valid B-Tree and hence can be used to search for ranges of function results just like it is used to search for ranges of values.

With a little effort, a B-Tree can also be used to search for the ranges of piecewise monotonic functions: those whose domain can be split into a number of continuous pieces with the function being monotonic within each piece (but it may be not monotonic and even not continuous across the pieces).

In this article, I'll demonstrate the algorithm to do that (implemented in pure SQL on PostgreSQL).

I will show how the performance of simple query

SELECT * FROM t_sine WHERE SIN(value) BETWEEN 0.1234 AND 0.1235

could be improved if the sargability of monotonic functions had been implemented in the optimizer.

Read the rest of this entry »

Things SQL needs: sargability of monotonic functions

I'm going to write a series of articles about the things SQL needs to work faster and more efficienly.

With these things implemented, most of the tricks I explain in my blog will become redundant and I'll finally have some more time to spend with the family.

Ever wondered why a condition like this:

WHERE TRUNC(mydate) = TRUNC(SYSDATE)

, which searches for the current day's records, is so elegant but so slow?

Of course this is because even if you create an index on mydate, this index cannot be used.

The expression in the left part of the equality is not a mydate. The database engine cannot find a way to use an index to search for it. It is said that this expression is not sargable.

Now, a little explanation about the indexes and sargability. If you are familiar with these, you can skip this chapter. But beware that this chapter is the only one illustrated, so skipping it will make the article too boring to read.

Ahem.

To locate a record in a B-Tree index, the keys of the index should be compared to the value being searched for.

Let's consider this sample B-Tree index:

Tags in nested sets: efficient indexing

Comments enabled. I *really* need your comment

Answering questions asked on the site.

Travis asks:

I just read your excellent post: Hierarchical data in MySQL: parents and children in one query.

I am currently trying to do something very similar to this. The main difference is, I am working with data that is in a nested set.

I would like to construct a query that will return a resultset of nodes matched with

LIKE, the ancestors of each of these nodes, and the immediate children of each of these nodes.

This is a very interesting question: it allows to demonstrate the usage of three types of indexes usable by MyISAM tables: BTREE, SPATIAL and FULLTEXT.

Nested sets is one of two models that are most often used to store hierarchical data in a relational table (the other one being adjacency list model). Unlike adjacency list, nested sets does not require ability to write recursive queries, which SQL originally lacked and still lacks now in MySQL (albeit it can be emulated to some extent). It is widely used in MySQL world.

I described both methods and their comparison in the article I wrote some time ago:

The main problem with the nested sets model is that though it is extremely fast with selecting descendants of a given node, it is very slow in selecting ancestors.

This is because of the way the B-Tree indexes work. They can be used to query for values of a column within the range of two constants, but not for the values of two columns holding a single constant between them. One needs the first condition to select the children (found between the lft and rgt of the given node), and the second condition to select the ancestor (with lft and rgt containing the lft and rgt of the given node).

That's why selecting the children is fast and selecting the ancestors is slow.

To work around this, the sets that form the hierarchy can be described as geometrical objects, with the larger sets containing the smaller sets. These sets can be indexed with a SPATIAL index which is designed specially for this purpose and both children and ancestors can be queried for very efficiently.

Unfortunately, finding the depth level is quite a hard task for the nested sets model even with the SPATIAL indexes.

It would be quite an easy task is MySQL supported recursion: we could just run a query to find the siblings of each record by skipping their whole domains recursively.

However, MySQL's recursion support is very limited and it relies on the session variables, which are not recommended to use in the complex queries.

To cope with this, we need to mix the nested sets and the adjacency list models. Hierarchy will be stored in two seemingly redundant ways: the unique parent and the LineString representing the nested sets.

This will help us to use the R-Tree index to find all ancestors of a given node and also use B-Tree index to find its immediate children.

Finally, the question mentions using LIKE to find the initial nodes. LIKE predicate with the leading wildcards is not sargable in MySQL. However, it seems that the leading wildcards are only used to split the words. In this case, a FULLTEXT index and the MATCH query would be much more efficient, since FULLTEXT index allows indexing a single record with several keys (each one corresponding to a single word in the column's text), so a search for the word in the space separated or a comma separated list uses the index and is much faster than scanning the whole table.

Hence, the query would use all three main types of indexes: BTREE, SPATIAL and FULLTEXT.

To illustrate everything said above, let's create a sample table:

Read the rest of this entry »

SQL Server: EXCEPT ALL

Answering questions asked on the site.

myst asks:

I have two really large tables with lots of columns, many of them are nullable

I need to remove all rows from

table1which are not present intable2, but there can be duplicates in both tables and writing more than 70IS NULLconditions would be a pain, and I want to make sure there's nothing I'm missing.Is there a more simple way?

SQL Server supports EXCEPT clause which returns all records present in the first table and absent in the second one. But this clause eliminates duplicates and cannot be used as a subject to a DML operation.

ANSI SQL standard describes EXCEPT ALL which returns all records from the first table which are not present in the second table, leaving the duplicates as is. Unfortunately, SQL Server does not support this operator.

Similar behavior can be achieved using NOT IN or NOT EXISTS constructs. But in SQL Server, IN predicate does not accept more than one field. NOT EXISTS accepts any number of correlated columns, but it requires extra checks in the WHERE clause, since equality operator does not treat two NULL values as equal. Each pair or nullable columns should be additionally checked for a NULL in both fields. This can only be done using OR predicates or COALESCE, neither of which adds to performance.

But there is a way to emulate EXCEPT ALL in SQL Server quite elegantly and efficiently.

Let's create two sample tables:

Read the rest of this entry »

Index search time depends on the value being searched

Comments enabled. I *really* need your comment

Answering questions asked on the site.

Daniel asks:

I have a table which stores track titles in a

VARCHAR(200)field. The field is indexed, but searching for titles beginning with a letter Z is noticeably slower than for those beginning with A, and the closer the initial letter is to Z, the slower is the query.My understanding is that a full table scan occurs, but

EXPLAINshows that the index is used. Besides, the table is quite large but the query is still reasonably fast.Could you please explain this behavior?

MySQL stores its indexes in B-Tree data structures.

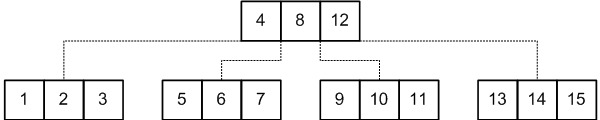

The Wikipedia link above explains the structure quite well so I won't repeat it. I'll rather draw a picture similar to the one in the article:

This picture is quite self-explanatory. The records are sorted in a tree order, so if you are searching for a certain value, say, 11, you, starting from the first page, should find the link to follow. To do this, you need to find the pair of values less than and greater than 11. In this case, you should follow the link which is between 8 and 12. Then you search for the next pair, etc, until you find your value or reach the end and make sure that your value is not there.

Following the links is quite simple, but how does the engine search for the values within one page?

This depends on how you declared the table.

MyISAM supports two algorithms for storing the index keys in a page: packed keys and unpacked keys.

Read the rest of this entry »

Searching for arbitrary portions of a date

Comments enabled. I *really* need your comment

From Stack Overflow:

I have a Ruby on Rails application with a PostgreSQL database; several tables have

created_atandupdated_attimestamp attributes.When displayed, those dates are formatted in the user's locale; for example, the timestamp 2009-10-15 16:30:00.435 becomes the string 15.10.2009 - 16:30 (the date format for this example being

dd.mm.yyyy - hh.mm).The requirement is that the user must be able to search for records by date, as if they were strings formatted in the current locale.

For example, searching for 15.10.2009 would return records with dates on October 15th 2009; searching for 15.10 would return records with dates on October 15th of any year, searching for 15 would return all dates that match 15 (be it day, month or year).

The simplest solution would be just retrieve the locale string from the client, format the dates according to that string and search them using LIKE or ~ operators (the latter, as we all know, searches for POSIX regular expressions).

However, this would be not very efficient.

Let's create a sample table and see:

Read the rest of this entry »

Join on overlapping date ranges

Comments enabled. I *really* need your comment

This post is inspired by a discussion with John Didion:

Is there any way to optimize the query for overlapping ranges in MySQL if both ranges are dynamic?

I have two tables, each with integer range columns (specified as

LineString), and I want to find rows that overlap.No matter what I try, the query planner never uses any indexes.

This question addresses a well-known problem of efficient searching for the intersecting intervals. The queries that deal with it require ability to search for the intervals (stored in two distinct columns) containing a constant scalar value.

Plain B-Tree indexes used by most databases do not speed up the queries like that. However, MySQL supports SPATIAL indexes that can index two-dimensional shapes and efficiently search for the shapes containing a given point.

With a little effort, time intervals can be converted into the geometrical objects, indexed with a SPATIAL index and searched for the given point in time (also presented as a gemetrical object). This is described in the article about overlapping ranges in MySQL.

The query described in that article, however, searches for the intervals overlapping a constant range, provided as a parameter to the query. Now, I will discuss how to adapt the query for a JOIN between two tables.

Let's create two sample tables, each containing a set of time ranges stored as geometrical objects, and find all records from both tables whose ranges overlap:

Read the rest of this entry »

Subscribe in a reader

Subscribe in a reader